Syncing a voiceover to lip movements in a video is one of those tasks that looks simple until you try to do it manually. Even a single-frame mismatch breaks the illusion. Fixing those frames in post-production takes hours, and for anyone producing explainers, localized content, or AI avatars at volume, that slowdown kills momentum.

AI now handles lip-syncing automatically. You upload an image and an audio file, and the model generates a synchronized talking-head video in minutes. This guide walks you through how to create a lip sync AI video using VEED's Fabric 1.0, the most accessible way to get started. We also cover the Lip Sync API for teams with more technical or high-volume needs.

Key takeaways:

- Use VEED Fabric 1.0 to create a lip sync AI video from a static image and an audio file, no existing footage required.

- Upload your character image, add your audio or voiceover, select your aspect ratio, and generate your video in under 10 minutes.

- VEED's Lip Sync API is a separate tool for developers who need to remap lips in existing video footage at scale.

- Clean audio and a clear character image are the two biggest factors in getting accurate, expressive lip sync output.

- After generating, use VEED's built-in editor to add subtitles, trim, and brand your video before publishing.

What is AI lip syncing?

AI lip syncing uses machine learning to automatically match spoken audio with a person's lip movements in a video. Instead of an animator adjusting mouth shapes frame by frame, the AI analyzes the phonemes in your audio and maps them to the corresponding mouth positions automatically.

Here's how the process works under the hood:

- The model breaks your speech audio into phonemes and converts them into visemes, the visible mouth shapes that correspond to those sounds (several phonemes can share one viseme).

- It maps those visemes to frame-level lip positions in either a static image or existing footage.

- The output is a synchronized video where mouth movement follows the audio naturally.

This works with avatars, uploaded character images, and pre-recorded video, depending on which tool you use.
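
To make the phoneme-to-viseme idea concrete, here is a toy sketch in Python. It is not how Fabric 1.0 or any production model works internally (those learn the mapping from data); the viseme labels, the small lookup table, and the 25 fps frame rate are all illustrative assumptions.

```python
# Toy illustration only: real lip sync models learn this mapping from data.
# Phoneme symbols are ARPAbet-style; the viseme labels are made up for this example.
PHONEME_TO_VISEME = {
    "P": "lips_closed", "B": "lips_closed", "M": "lips_closed",
    "F": "lip_to_teeth", "V": "lip_to_teeth",
    "AA": "open_wide", "AE": "open_wide",
    "IY": "spread", "EH": "spread",
    "UW": "rounded", "OW": "rounded",
}


def visemes_per_frame(timed_phonemes, fps=25):
    """Return one viseme label per video frame.

    timed_phonemes is a list of (phoneme, start_sec, end_sec) tuples.
    Frames not covered by any phoneme, or covered by an unknown one, stay at "rest".
    """
    if not timed_phonemes:
        return []
    total_seconds = max(end for _, _, end in timed_phonemes)
    n_frames = round(total_seconds * fps)
    frames = ["rest"] * n_frames
    for phoneme, start, end in timed_phonemes:
        viseme = PHONEME_TO_VISEME.get(phoneme, "rest")
        first, last = round(start * fps), round(end * fps)
        for i in range(first, min(last, n_frames)):
            frames[i] = viseme
    return frames
```

Calling `visemes_per_frame([("M", 0.0, 0.08), ("AA", 0.08, 0.2)])` yields two closed-lip frames followed by three open-mouth frames, which is the frame-level mapping the list above describes.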

How to lip sync videos with AI using VEED Fabric 1.0

The most straightforward way to create a lip sync AI video is with VEED Fabric 1.0. You bring a character image and an audio file. Fabric animates the character's lips to match your audio and outputs a ready-to-publish video. No existing footage needed, no animation background required.

Here's what you need before you start:

- A VEED account with Fabric 1.0 access

- A clear image of your character (photo, illustration, or brand mascot)

- Your audio: a recorded voiceover, uploaded audio file, or typed script for text-to-speech

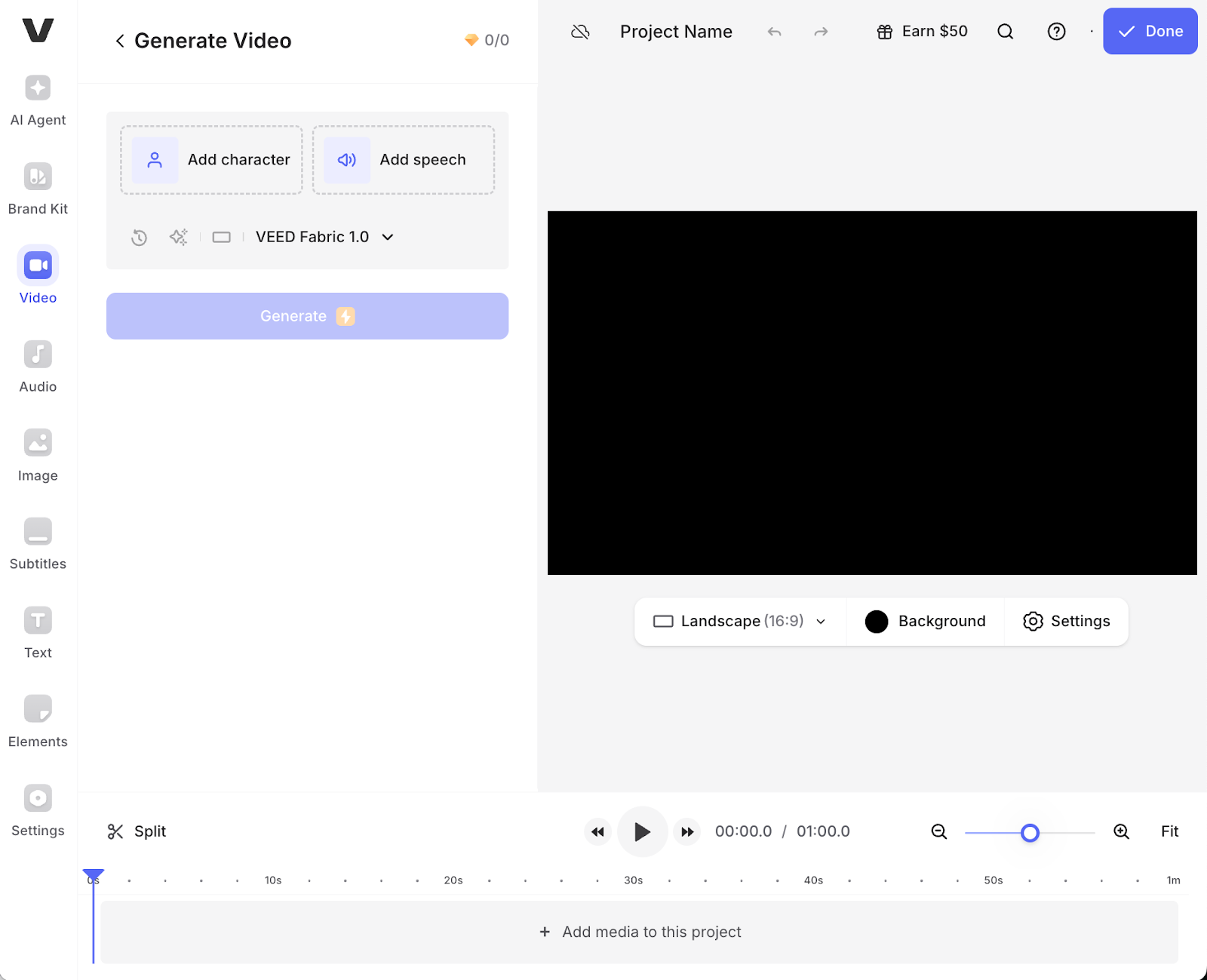

Step 1: Open VEED’s AI Playground and select Fabric 1.0

Open Fabric 1.0 from VEED. This tool lets you create lip-synced talking-head videos featuring your chosen character.

Step 2: Add your character

Upload an image of the character you want to animate (this could be a real person, artwork, or even a brand mascot).

Step 3: Add your audio

Upload your audio file or record directly in VEED. This could be a voice recording, narration track, or a podcast clip. If your script is text-only, you can use VEED's text-to-speech feature to generate the voice before uploading.

Step 4: Choose your desired aspect ratio and resolution

Pick the aspect ratio and resolution that fit where the video will be published. VEED supports the major formats for each platform:

- Instagram Stories and Reels: Vertical 9:16

- LinkedIn and Facebook: Square 1:1 or landscape 16:9

- TikTok: Vertical 9:16

- YouTube: Landscape 16:9
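
If you plan videos for several platforms at once, a small lookup like the one below can help. This is a sketch: the platform keys are hypothetical names, and the 1080-pixel short side is an assumption for illustration, not a statement of the resolutions VEED offers.

```python
# Aspect ratios taken from the list above; the dictionary keys are hypothetical names.
PLATFORM_RATIOS = {
    "instagram_stories_reels": ["9:16"],
    "linkedin_facebook": ["1:1", "16:9"],
    "tiktok": ["9:16"],
    "youtube": ["16:9"],
}


def frame_size(ratio, short_side=1080):
    """Convert a ratio such as "9:16" into (width, height) in pixels.

    short_side is the pixel length of the shorter edge (assumed 1080 here).
    """
    w, h = (int(part) for part in ratio.split(":"))
    scale = short_side / min(w, h)
    return round(w * scale), round(h * scale)


def sizes_for(platform, short_side=1080):
    """List the (width, height) options for one platform."""
    return [frame_size(r, short_side) for r in PLATFORM_RATIOS[platform]]
```

For example, `frame_size("9:16")` gives `(1080, 1920)` and `sizes_for("linkedin_facebook")` gives `[(1080, 1080), (1920, 1080)]`.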

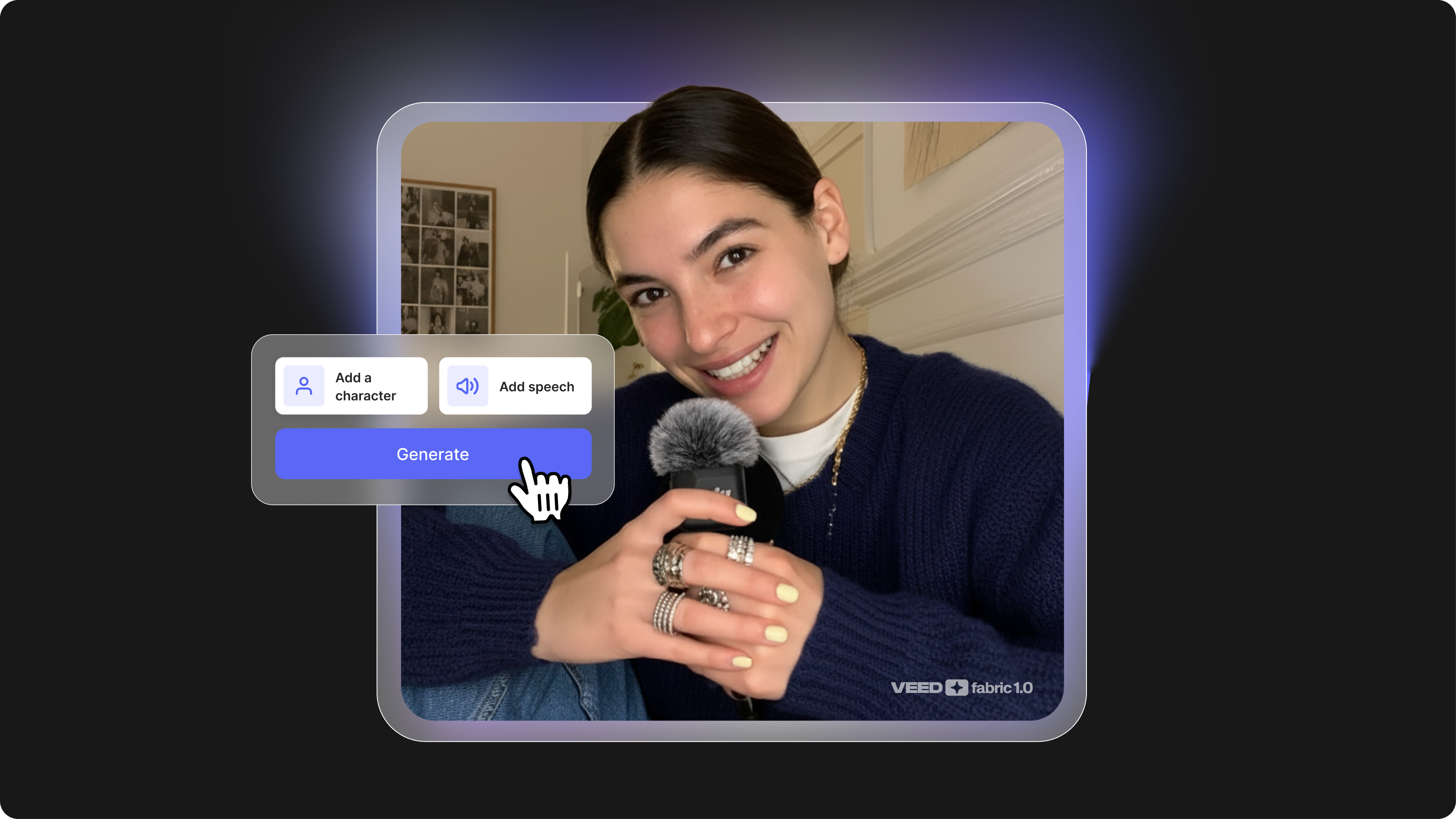

Step 5: Generate the AI lip sync video

Click "Generate" to create your talking video.

Fabric 1.0 synchronizes the character's lip movements with your audio or selected voiceover. The AI analyzes your audio's phonemes and maps them to realistic mouth shapes frame by frame.

Generation typically takes 5-10 minutes, depending on content length and complexity. Longer videos or highly detailed character styles may take slightly more time.

Step 6: Download or edit it further in the VEED editor

Once your video is generated, you can download it and share it. If you want to edit it further, you can import it into VEED's editor and add some final touches like:

- Captions or subtitles to boost engagement (80% of social video is watched without sound)

- Transitions between scenes for multi-section videos

- Background music to enhance emotional impact

- Branding elements like logos, color overlays, or text animations

VEED's editor is built for speed. Everything happens in your browser, so there's nothing to install and no complex software to learn.

Common challenges when learning how to lip sync

These are the issues that consistently trip up new users, based on how the AI processes your inputs.

Timeline expectations

Expect 5-10 minutes to generate, depending on content length and complexity. Simple avatars with short scripts generate faster; detailed characters with more extended dialogue take longer.

Best tools for lip-syncing videos with AI

The top tools for creating an AI lip sync video are VEED Fabric 1.0, VEED's Lip Sync API, and D-ID. Each serves a different need: Fabric 1.0 is built for creators generating talking-head content from scratch; the Lip Sync API is designed for developers syncing audio to existing footage at scale; and D-ID is a standalone platform for animating still photos.

We tested each platform based on:

- Input types supported (image, video, audio)

- Output quality and realism

- API accessibility and workflow integration

- Speed and scalability

- Use case alignment (marketing, dubbing, personalization)

1. VEED Fabric 1.0

Fabric 1.0 is VEED's AI lip-sync video model, designed to create longer, more expressive talking-head videos directly from your input character and voice recordings. VEED also lets users generate entirely custom characters in different styles, including realistic, claymation, and anime. It's a versatile, creator-friendly tool built specifically for social media storytelling and branding.

Use cases:

- Build brand spokespersons or animated explainers from scratch

- Run A/B tests on avatar styles for different platforms

- Generate TikTok, LinkedIn, or Instagram videos with personalized presenters

Pros:

- Custom character generation with no need for pre-set avatars. Create unique spokespersons that match your brand identity.

- Supports style transfer and emotional expression. Make your avatar happy, serious, enthusiastic, or contemplative based on your content needs.

- Seamlessly integrated with VEED's video editor for full workflow support. Generate, edit, caption, and export without switching tools.

Cons:

- Limited free usage in some regions (India, Pakistan, Bangladesh). Check availability in your area.

Best for: Marketers, educators, and creators seeking fast, flexible talking content with complete creative control

2. VEED Lip Sync API

VEED’s Lip Sync API is a developer-ready tool designed to automatically remap a speaker’s lips to match new audio in existing video footage. Ideal for dubbing, localization, video rephrasing, or creating dynamic AI avatars, it delivers natural lip movements synced precisely to speech. With just two inputs (video and audio), you get back a fully synced MP4 ready for publishing.

Use cases:

- Localize keynote talks and educational videos across languages

- Refresh old video ads with updated audio messaging without reshooting

- Deploy dynamic customer service avatars that respond in real time

- Auto lip sync large video libraries for multilingual markets

Pros:

- Lightning-fast processing at approximately 2-2.5 minutes per video minute. Scale to hundreds of videos without bottlenecks.

- Affordable at $0.40 per minute with no hidden fees. Transparent pricing makes budgeting simple.

- Handles multiple aspect ratios and video formats. Works seamlessly with landscape, portrait, and square videos.

- No training or complex setup required. Upload your files, hit sync, and download results.

Cons:

- Limited to audio replacement. It is not designed for generating avatars from scratch, only for syncing existing footage.

- Requires clean input footage for best accuracy. Low-quality video or poor lighting can reduce sync quality.

Best for: Product teams, localization services, and developers looking to scale high-volume lip-synced content through API integration.
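
For budgeting, the figures quoted above ($0.40 per minute and roughly 2 to 2.5 minutes of processing per minute of video) can be turned into a quick estimate. The sketch below assumes billing is linear per output minute and that videos are processed one after another; neither assumption is confirmed here, so treat the numbers as a rough planning aid.

```python
PRICE_PER_MINUTE_USD = 0.40      # price quoted in this guide
PROCESSING_RATIO = (2.0, 2.5)    # processing minutes per video minute, as quoted above


def estimate_batch(video_minutes, languages=1):
    """Estimate cost and processing time for a batch of videos.

    video_minutes: list of video lengths in minutes.
    languages: number of dubbed versions per video.
    Assumes linear per-minute billing and sequential processing.
    """
    output_minutes = sum(video_minutes) * languages
    low, high = (output_minutes * ratio for ratio in PROCESSING_RATIO)
    return {
        "output_minutes": output_minutes,
        "cost_usd": round(output_minutes * PRICE_PER_MINUTE_USD, 2),
        "processing_minutes": (low, high),
    }
```

As a usage example, three videos of 3, 5, and 2 minutes dubbed into 4 languages come to 40 output minutes: `estimate_batch([3, 5, 2], languages=4)` reports a cost of 16.0 USD and between 80 and 100 minutes of processing under these assumptions.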

3. D-ID

D-ID is a creative AI platform that brings portraits to life through animated talking-head videos. It supports both UI and API access, enabling users to generate quick, engaging lip-synced videos from still photos using text-to-speech or voice recordings.

Use cases:

- Onboarding and training video creation

- Personalized video campaigns for sales or education

- Social media avatars or virtual presenters

- Quick lip sync video app prototypes

Pros:

- Easy to use, even for non-technical users. Simple drag-and-drop interface gets you started in seconds.

- Realistic facial animations and support for multiple languages. Suitable for global campaigns.

- Fast rendering speeds. Generate videos in minutes.

Cons:

- Less control over animation details compared to Fabric or custom workflows.

- Branding limitations on free tiers. Watermarks appear unless you upgrade.

Best for: Educators and marketers creating low-effort, scalable avatar content without heavy technical investment. Also works well for startups experimenting with AI video.

For a deeper breakdown of how these models compare on speed, pricing, and realism against competitors like Kling V2 Pro, HeyGen, and Hedra, see our full guide: Best Lip-Sync API for AI Video: How VEED Fabric 1.0 Compares to the World's Top Models (2026).

Common misconceptions about AI lip syncing

AI lip syncing isn't perfect… yet. While today's AI lip-sync tools deliver impressive results, you may still need to review the outputs and make minor adjustments. The technology excels at creating believable, expressive lip movements, but edge cases (like overlapping dialogue or extreme camera angles) can occasionally trip it up.

It's not just for avatars. AI lip syncing works for real people, dubbed footage, and localization projects. Whether you're creating an AI avatar lip-sync video or syncing audio to your CEO's existing footage, the technology adapts to your content type.

Why AI lip syncing matters now

As demand for multilingual, short-form, and scalable video grows, creators and companies need faster ways to produce synchronized content. Video production timelines are shrinking. Audiences expect polished, professional videos across every platform, from TikTok to LinkedIn to Instagram Stories.

AI removes the manual bottleneck and makes video lip sync scalable, primarily through APIs and auto-generated video platforms. Instead of hiring animators or spending days in post-production, you can generate talking head videos in minutes.

AI lip syncing vs manual lip syncing

AI lip-syncing automates the process using machine learning, dramatically reducing time and human labor. Upload your audio, generate your video, and get results in under 10 minutes. Meanwhile, manual lip syncing involves frame-by-frame adjustments by an animator or editor. It's more precise for complex animation work, but extremely time-consuming and expensive for most content creators.

Why use an AI lip sync video tool

- Saves hours of manual editing or animation. What used to take 8+ hours per video now takes 5 to 10 minutes, and that time saving compounds across dozens or hundreds of videos.

- Enables scalable content creation for social media, education, or marketing. Run A/B tests with different spokespersons, create personalized video messages, or spin up localized versions without reshooting.

- Supports translation and localization workflows through dubbing. Replace the audio track in any language while keeping lip movements synced.

- Enhances accessibility with automated avatar or dubbed narration, making content more inclusive across language and ability.

AI lip syncing best practices

Advanced practitioners focus on aligning technical precision with emotional realism. The best AI lip sync workflows incorporate pacing, tone, context, and platform strategy to maximize viewer engagement and authenticity.

Here's how to make lip sync videos that actually perform.

1. Apply emotion tagging strategically

Use Fabric's emotional style tags (like "serious," "excited," "contemplative") based on your script's tone. Matching energy between voice and avatar makes outputs feel more human.

Pro tip: Run A/B tests with different emotion tags on the same audio and compare viewer retention. You'll quickly learn which emotional tones resonate with your audience.

2. Break long scripts into shorter dialogue blocks

Instead of uploading long monologues, segment your dialogue into digestible clips. This improves timing accuracy and makes edits easier. Short clips are also more shareable on social media. Try to keep each segment under 45 seconds for social video formats. Anything longer than that risks a drop-off.
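
One way to segment a script before recording or generating audio is to pack whole sentences into blocks that stay under the 45-second target. The sketch below estimates duration from word count, assuming a speaking rate of 150 words per minute; that rate is an assumption, so adjust it to your narrator or text-to-speech voice.

```python
import re

WORDS_PER_MINUTE = 150  # assumed average speaking rate, not a measured value
MAX_SECONDS = 45        # target segment length from the tip above


def estimated_seconds(text, wpm=WORDS_PER_MINUTE):
    """Rough spoken duration of text, based on word count alone."""
    return len(text.split()) / wpm * 60


def split_script(script, max_seconds=MAX_SECONDS, wpm=WORDS_PER_MINUTE):
    """Greedily group whole sentences into segments under max_seconds.

    A single sentence longer than the limit is kept as its own segment
    rather than being cut mid-sentence.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", script.strip()) if s]
    segments, current = [], ""
    for sentence in sentences:
        candidate = f"{current} {sentence}".strip()
        if current and estimated_seconds(candidate, wpm) > max_seconds:
            segments.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        segments.append(current)
    return segments
```

Splitting on sentence boundaries keeps each clip self-contained, which makes it easier to re-generate a single block if one line of dialogue changes.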

3. Optimize inputs for style and sync

Design your prompts or image uploads with platform aesthetics in mind. Use anime-style avatars for younger audiences on TikTok. Use realistic avatars for LinkedIn or training videos. Match the visual style to where your content will live.

Use Fabric with VEED's voice changer and subtitle generator for complete control over your output.

4. Automate at scale using the API

Integrate Fabric or the Lip Sync API into your CMS or video production workflow to automatically generate hundreds of localized or dynamic videos at scale. This is ideal for e-learning companies or SaaS teams translating training content into multiple languages, so you don’t need to reshoot each version.

Simply dub the audio, and the system syncs the lips to the new track, so a single video shoot can yield 10 or more lip-synced language versions.
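
A batch workflow like this usually starts with a job plan: one source video paired with one dubbed audio track per language. The sketch below only builds and runs that plan; it does not call VEED's API. `LipSyncJob`, `plan_jobs`, `run_jobs`, and the `submit` callback are hypothetical names, and `submit` stands in for whatever client code you write against the actual API documentation.

```python
from dataclasses import dataclass
from pathlib import Path


@dataclass(frozen=True)
class LipSyncJob:
    """One video + audio pair to be synced (hypothetical structure)."""
    video: Path
    audio: Path
    language: str
    output: Path


def plan_jobs(video, dubbed_audio, out_dir):
    """Build one job per language.

    dubbed_audio maps a language code (e.g. "es") to the dubbed audio file path.
    """
    video, out_dir = Path(video), Path(out_dir)
    return [
        LipSyncJob(video, Path(audio), lang, out_dir / f"{video.stem}_{lang}.mp4")
        for lang, audio in sorted(dubbed_audio.items())
    ]


def run_jobs(jobs, submit):
    """Run every job through a caller-supplied submit function.

    submit(job) is a placeholder for your own API client code; its return
    value (for example a job ID or output URL) is stored per language.
    """
    return {job.language: submit(job) for job in jobs}
```

Keeping the plan separate from the submission step means you can review or log the full list of language versions before spending any API credits.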

5. Track engagement metrics post-publication

Measure performance across click-through rate, watch time, and share rate for videos using lip-synced avatars versus static or traditional formats.

KPI targets to aim for:

- 60% video completion rate for social platforms

- 15% increase in multilingual engagement for localized campaigns

- 80% reduction in production time with API automation

Test, measure, iterate. The data will tell you what's working.
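
To check results against the targets listed above, a small report function is enough. This is a sketch with hypothetical parameter names; it assumes you can export view counts, an engagement figure for baseline and localized campaigns, and production time per video from your own analytics.

```python
# Targets from the KPI list above, expressed as fractions.
TARGETS = {"completion_rate": 0.60, "engagement_lift": 0.15, "time_reduction": 0.80}


def kpi_report(completed_views, total_views,
               baseline_engagement, localized_engagement,
               manual_minutes, automated_minutes):
    """Return {kpi_name: (value, target_met)} for the three targets.

    All inputs are plain numbers from your analytics export (assumed non-zero
    where they are used as a denominator).
    """
    actual = {
        "completion_rate": completed_views / total_views,
        "engagement_lift": (localized_engagement - baseline_engagement) / baseline_engagement,
        "time_reduction": (manual_minutes - automated_minutes) / manual_minutes,
    }
    return {name: (round(value, 3), value >= TARGETS[name])
            for name, value in actual.items()}
```

For instance, 630 completed views out of 1,000, engagement rising from 200 to 240 interactions, and production time dropping from 480 to 10 minutes would meet all three targets.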

Final frame: Make your videos speak

Creating great lip sync is about believability. With VEED’s Fabric and Lip Sync API, anyone can now create realistic, expressive, and synchronized videos at scale. Whether you’re building personalized content, translating for global audiences, or turning avatars into brand reps, these tools give you the flexibility and quality you need.

Ready to make your content speak? Start with Fabric or Lip Sync API and let your voice move your visuals.